MOTOMAN NEXT: A New Generation of Industrial Robots Combining AI, Vision, and Unified Control

Yaskawa Unveils a New Generation of Industrial Robots

By unveiling its MOTOMAN NEXT platform, Yaskawa marks an important milestone in industrial automation. The Japanese company, already well established in robotics and automation, introduces an architecture designed to bridge two worlds long kept apart: traditional industrial automation (OT) and advanced digital technologies (IT).

The goal? To make robots capable not only of executing tasks, but also of observing, understanding, and adapting in a global context marked by labor shortages and the rise of complex tasks demanding automation.

An All-in-One Platform Unifying Hardware, Software, and Embedded Intelligence

According to the press release, MOTOMAN NEXT is presented as a complete solution in which “robot, controller, software, and engineering tools” are grouped into a single ecosystem.

The core of the system relies on two main units:

- RCU (Robot Control Unit): the traditional robot control unit.

- ACU (Autonomous Control Unit): a new module dedicated to intelligent functions, based on NVIDIA Jetson Orin NX-Edge, integrating CPU + GPU, running Linux with Docker support.

This hybrid architecture paves the way for robotics that depends less on external computers: functions usually assigned to IT stations (image processing, AI, vision, planning, sensor management…) are integrated directly into the controller.

A rare approach in an industry typically fragmented between PLC logic and PC-based software.

Yaskawa also provides pre-installed APIs for motion control, planning, 2D/3D vision, force control, and image processing via HALCON ©.

A ROS2 interface connected via gRPC further opens the platform to the open-source robotics community.

With MOTOMAN NEXT, Yaskawa is no longer just programming robots: it is teaching them to perceive, understand, and adapt.

Toward a Fusion of OT and IT Worlds

A section of the press release highlights a major problem in modern industrial robotics: the gap between the languages, tools, and programming philosophies of OT engineers and IT developers.

OT engineers work with PLCs, while IT developers use high-level code (Python, C++), often generated on a PC then injected into the robot through interfaces that can be limited or prone to latency.

MOTOMAN NEXT attempts to bridge this gap by bringing these logics together in a single controller capable of executing standard Docker modules while optimizing classic industrial robotics functions (kinematics, speeds, singularities) for Yaskawa hardware.

This integration avoids historical issues such as:

- Complex waypoints

- Unstable dynamic behavior

- Dependency on external PCs

- Difficult integration of vision or sensors

The idea is to offer IT developers the experience of a seasoned robot programmer while ensuring that advanced applications (vision, AI, perception) run directly at the controller level.

Robots That Perceive, Understand, and Produce

This change of methodology is described as a transition from the “Code and produce” paradigm to “Perceive and produce”.

Traditional robots operate in deterministic environments, with standardized parts and preprogrammed sequences. But when variability increases (formats, batch sizes, operation order), classical programming reaches its limits.
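To make the contrast concrete, here is a minimal Python sketch of the two paradigms. It is an illustration only, not Yaskawa's API: the `Detection` type, the function names, and the coordinates are all hypothetical. The first function returns pick positions fixed at programming time; the second derives them from whatever a vision system reports on the current cycle.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Detection:
    """A part reported by a (hypothetical) vision system."""
    label: str
    x_mm: float
    y_mm: float


# "Code and produce": pick positions are fixed when the program is written.
FIXED_PICKS: List[Tuple[float, float]] = [(100.0, 50.0), (200.0, 50.0)]


def code_and_produce() -> List[Tuple[float, float]]:
    """Return the same pick positions on every cycle."""
    return list(FIXED_PICKS)


def perceive_and_produce(detections: List[Detection],
                         wanted: str) -> List[Tuple[float, float]]:
    """Derive pick positions from what is observed in the current cycle."""
    matches = [d for d in detections if d.label == wanted]
    # Sort left to right so the pick order is deterministic.
    matches.sort(key=lambda d: d.x_mm)
    return [(d.x_mm, d.y_mm) for d in matches]


if __name__ == "__main__":
    scene = [
        Detection("bolt", 212.5, 48.0),
        Detection("nut", 90.0, 60.0),
        Detection("bolt", 97.3, 55.1),
    ]
    print(code_and_produce())
    print(perceive_and_produce(scene, "bolt"))
```

In the first case, a part that arrives shifted or in a different order is simply missed. In the second, the same program adapts, because the positions come from the scene rather than from the code.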

With the combination of sensors + AI, MOTOMAN NEXT targets applications previously reserved for human workers:

- Flexible assembly

- Sorting and handling of unstructured flows

- Variable loading/unloading

- Random cleaning and manipulation

- Harvesting and packaging in changing environments

Potentially impacted sectors go far beyond heavy industry: logistics, healthcare, construction, food service, and recycling, all industries currently suffering from labor shortages.

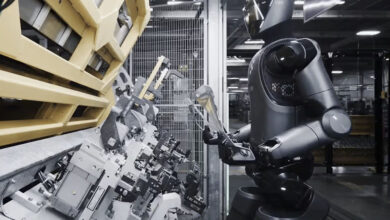

A New Line of Industrial Robots and Cobots

The MOTOMAN NEXT line (NEX series) covers payloads from 4 kg to 35 kg, with high-inertia servomotors designed to ensure perfect alignment between the digital model and real operation, facilitating simulation and virtual optimization.

Yaskawa also introduces new cobots (NHC series: 12 and 30 kg), equipped with an embedded RGB-D camera enabling direct perception from the robot and adaptive reactions in real time.

This “bodycam” opens the door to more natural interactions between robot and environment.

Digital Twin and Advanced Engineering

The MOTOMAN NEXT package includes the YNX Robot Simulator, a professional simulation tool enabling:

- Virtual cell creation

- Trajectory testing

- AI scenario validation

- Optimization before real deployment

This digital twin is key to reducing development risks and supporting the industrialization of intelligent robotic applications.

For advanced teams, Yaskawa also announces official support for NVIDIA’s tools: Isaac Sim™ and cuMotion.

Robotic autonomy is entering a new era where the robot is no longer dependent on an external PC to think.

A Simplified Operator Interface

On the operator side, Yaskawa focuses on continuity: the Android Smart Pendant tablet remains the main interface. Sequences can be created in block-based language using icons representing functions (planning, vision recognition…), making programming more intuitive.

The platform is also prepared for next-gen LLM-based interactions: voice commands, gestures, AR (augmented reality) assistance.

Automatic Trajectory Planning: A Key Feature

Among the highlighted technical features, automatic collision-free trajectory planning (Path Planning) plays a central role.

A simple “MOVAUTO” command would allow the robot to move from point A to point B while automatically avoiding surrounding obstacles.

This planning relies on 3D modeling:

- Static: cell model

- Dynamic: real-time updates from the camera

This is essential for AI applications and evolving Industry 4.0 environments.
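The principle of planning against a static model combined with live camera updates can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Yaskawa's planner: the cell is reduced to a 2D grid, a plain breadth-first search stands in for the real algorithm, and `move_auto`, `plan_path`, and the obstacle sets are hypothetical names.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]


def plan_path(start: Cell, goal: Cell, blocked: Set[Cell],
              width: int, height: int) -> Optional[List[Cell]]:
    """Breadth-first search for a shortest 4-connected path around blocked cells."""
    if start in blocked or goal in blocked:
        return None
    parents: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through the parents to rebuild the path.
            path: List[Cell] = []
            node: Optional[Cell] = cell
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            nx, ny = nxt
            inside = 0 <= nx < width and 0 <= ny < height
            if inside and nxt not in blocked and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None  # no collision-free path exists


def move_auto(start: Cell, goal: Cell, static_model: Set[Cell],
              camera_obstacles: Set[Cell],
              width: int = 5, height: int = 5) -> Optional[List[Cell]]:
    """Hypothetical stand-in for a MOVAUTO-style call: merge both models, then plan."""
    return plan_path(start, goal, static_model | camera_obstacles, width, height)


if __name__ == "__main__":
    wall = {(2, 0), (2, 1), (2, 2)}            # static: known cell geometry
    print(move_auto((0, 0), (4, 0), wall, set()))
    print(move_auto((0, 0), (4, 0), wall, {(2, 3)}))  # camera reports a new obstacle
```

The second call returns a longer detour because the obstacle reported by the camera closes the gap used in the first. Replanning whenever the dynamic set changes is what lets the same command keep working in a cell that does not stay still.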

A Strategy Based on Partnerships and Real-World Use Cases

Yaskawa emphasizes that the success of AI/robotics projects depends on close cooperation between:

- End users

- Integrators

- Technology partners

- Yaskawa

The goal is to go beyond prototypes and achieve solutions that are fully industrialized and capable of continuous production.

A Significant Breakthrough in a Changing Robotics Landscape

With MOTOMAN NEXT, Yaskawa is not simply launching a new robot line: it is proposing a complete re-architecture of the robotics development cycle.

The OT/IT fusion, native integration of AI and vision, advanced simulation, embedded perception, and intelligent planning address today’s challenges: automating complex tasks, making robots more autonomous, and compensating for labor shortages across many sectors.

If the platform delivers on its promises in real-world deployments, it could redefine industrial robotics standards where the boundary between mechanical execution and software intelligence is progressively disappearing.

FAQ – Yaskawa MOTOMAN NEXT

2. How does MOTOMAN NEXT differ from traditional robot controllers?

Unlike conventional controllers focused solely on execution, MOTOMAN NEXT integrates an intelligent unit (ACU) based on NVIDIA Jetson Orin NX-Edge, enabling advanced functions such as vision processing, image analysis, embedded AI, and intelligent planning directly within the controller.

3. What problems does the platform aim to solve?

Yaskawa seeks to bridge the gap between OT (industrial automation) systems and IT (advanced software applications). The platform simplifies the integration of vision, sensors, and AI while eliminating reliance on external PCs and reducing issues like latency or unstable dynamic behavior.

4. What types of applications become possible thanks to this new architecture?

The combination of AI and onboard perception enables automation of tasks previously performed only by humans: flexible assembly, unstructured sorting, random handling, variable flow management, adaptive logistics, and operations in food processing, construction, healthcare, or recycling.

5. What new robots were announced with MOTOMAN NEXT?

Yaskawa introduced the NEX series, covering payloads from 4 to 35 kg, as well as new NHC-series cobots (12 and 30 kg) equipped with an integrated RGB-D camera. These robots offer improved consistency between digital models and real behavior, making simulation and programming more efficient.

6. How does the platform facilitate engineering and production deployment?

MOTOMAN NEXT includes a digital twin through the YNX Robot Simulator, enabling virtual cell creation, trajectory testing, AI scenario validation, and optimization before deployment. Compatibility with Isaac Sim and cuMotion further enhances advanced simulation capabilities.

7. What key improvements does the platform offer to users and operators?

Operators benefit from a simplified Smart Pendant interface, block-based programming tools, and modern interaction methods such as voice control, gestures, and augmented reality. Automatic trajectory planning with obstacle avoidance also improves safety and operational smoothness.