What is Physical AI in Robotics?

Artificial intelligence has established itself as a driver of digital transformation. But until recently, AI remained primarily software-based: data analysis, image recognition, conversational assistants, recommendation systems.

In 2026, AI is reaching a new milestone: it is moving beyond screens to act in the real world. This marks the emergence of what is now called physical AI.

Definition: What is Physical AI?

Physical AI refers to artificial intelligence systems capable of perceiving, deciding, and acting in a real, material environment in real time. Unlike purely software-based AI, it is directly connected to sensors, actuators, and physical machines.

In robotics, this means AI no longer just analyzes or predicts: it directly controls movements, adapts its behavior to the environment, corrects its actions, and interacts with humans, objects, or other machines.

This forms a complete loop:

- Perception (vision, sensors, signals)
- Understanding (AI models)
- Decision (reasoning, arbitration)
- Action (motors, arms, locomotion)
- Continuous feedback
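The loop above can be illustrated with a toy control program. This is a minimal, hypothetical sketch: `Robot1D`, `understand`, `decide`, and the proportional gain are assumptions standing in for real sensors, AI models, and actuators. A real system would replace `decide` with a learned policy or planner, but the structure (perceive, understand, decide, act, then re-read the sensors) is the same.

```python
from dataclasses import dataclass


@dataclass
class Robot1D:
    """Toy robot moving along a line; a stand-in for real motors and sensors."""
    position: float = 0.0

    def read_sensor(self) -> float:
        # Perception: a real robot would fuse cameras, lidar, force sensors, etc.
        return self.position

    def apply_velocity(self, velocity: float, dt: float) -> None:
        # Action: integrate the commanded velocity over one control period.
        self.position += velocity * dt


def understand(measurement: float, target: float) -> float:
    # Understanding: turn raw sensor data into a task-level quantity (the error).
    return target - measurement


def decide(error: float, gain: float = 2.0, max_speed: float = 1.0) -> float:
    # Decision: proportional command, clamped to a safe speed limit.
    command = gain * error
    return max(-max_speed, min(max_speed, command))


def control_loop(robot: Robot1D, target: float, dt: float = 0.01,
                 tolerance: float = 1e-3, max_steps: int = 10_000) -> int:
    """Run perceive -> understand -> decide -> act until the target is reached.

    Returns the number of cycles executed. Re-reading the sensor at the top of
    each cycle is the continuous feedback that corrects the previous action.
    """
    for step in range(max_steps):
        error = understand(robot.read_sensor(), target)
        if abs(error) < tolerance:
            return step
        robot.apply_velocity(decide(error), dt)
    return max_steps


robot = Robot1D()
cycles = control_loop(robot, target=0.5)
print(f"reached {robot.position:.4f} after {cycles} cycles")
```

The speed clamp in `decide` is a deliberate design choice: even a simple policy should never command more than the hardware or the environment can safely tolerate.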

Physical AI no longer just calculates: it acts in the real world.

How Does Physical AI Change Robotics?

For a long time, industrial robots operated according to deterministic programs: a specific task, a fixed scenario, a controlled environment.

Physical AI introduces a major shift: dynamic adaptation.

A robot equipped with physical AI can:

- Handle unstructured environments
- Adapt to unforeseen variations
- Learn from mistakes
- Adjust movements in real time
- Cooperate with other robots or humans

This paves the way for much more versatile robots, capable of leaving tightly controlled environments to operate in complex, changing, real-world industrial contexts.

Technological Building Blocks of Physical AI

Physical AI relies on the convergence of several key technologies:

- Advanced sensors: 3D vision, lidar, RGB-D cameras, force sensors, tactile sensors, acoustic sensors… They allow the robot to perceive its environment in detail.
- Artificial intelligence models: neural networks, multimodal models, decision models, and sometimes even LLM-type models adapted for action. These interpret the situation and choose relevant actions.
- Actuators and mechatronics: motors, articulated arms, grippers, locomotion systems. This is what allows AI to turn decisions into physical action.
- Embedded computing and real-time processing: physical AI requires decisions within milliseconds. This demands powerful embedded architectures, often hybrid (edge + cloud).
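To show why millisecond-level decisions push toward hybrid edge + cloud designs, here is a minimal sketch of a placement rule. It assumes each task's deadline and its compute time on the robot and on a server are known in advance. The `Task` fields, the `place_task` policy, and all timing figures are hypothetical illustrations, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    deadline_ms: float       # maximum acceptable time to obtain a result
    edge_compute_ms: float   # estimated run time on the robot's embedded computer
    cloud_compute_ms: float  # estimated run time on a remote server


def place_task(task: Task, network_rtt_ms: float) -> str:
    """Return 'edge', 'cloud', or 'infeasible' for one task.

    Cloud latency includes the network round trip. The edge is preferred
    whenever it meets the deadline, so time-critical reflexes never depend
    on connectivity; heavier jobs are offloaded only if the cloud can still
    answer in time.
    """
    if task.edge_compute_ms <= task.deadline_ms:
        return "edge"
    if network_rtt_ms + task.cloud_compute_ms <= task.deadline_ms:
        return "cloud"
    return "infeasible"  # neither option fits: simplify the model or relax the deadline


# Hypothetical workload with illustrative timings (not measurements).
tasks = [
    Task("emergency_stop_check", deadline_ms=10, edge_compute_ms=2, cloud_compute_ms=1),
    Task("fleet_replanning", deadline_ms=2000, edge_compute_ms=5000, cloud_compute_ms=300),
    Task("grasp_planning", deadline_ms=50, edge_compute_ms=120, cloud_compute_ms=20),
]
placement = {t.name: place_task(t, network_rtt_ms=80) for t in tasks}
print(placement)
```

Note that the emergency check stays on the robot even though the server computes faster: once the network round trip is counted, only the edge reliably meets a 10 ms budget.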

Physical AI, Industrial and Humanoid Robots

Physical AI is now at the heart of two major robotic evolutions.

In industry:

It enables the transition from classic automation to autonomous systems:

- Robots capable of adjusting their trajectory
- Self-optimizing production lines
- Intelligent and corrective maintenance
- Smooth human–machine interaction

Robots are no longer just executors: they are systems capable of arbitrating between competing objectives.

In humanoid robots:

Physical AI is essential for:

- Balance
- Handling varied objects
- Understanding human gestures
- Navigating human-designed environments
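Balance gives a concrete illustration. One classic ingredient is a static-stability test: the robot is statically balanced when the ground projection of its center of mass lies inside the support polygon formed by its feet. The sketch below implements only that test. It assumes a convex polygon listed counter-clockwise, and the function name and foot dimensions are hypothetical. It ignores dynamics, so walking controllers rely on richer criteria such as the zero-moment point.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def is_statically_stable(com_xy: Point, support_polygon: Sequence[Point],
                         margin: float = 0.0) -> bool:
    """Return True if the center-of-mass projection lies inside the support polygon.

    `support_polygon` must be convex, with distinct vertices listed
    counter-clockwise (e.g. the outline of the feet touching the ground).
    `margin` requires the point to sit at least that far inside every edge.
    """
    px, py = com_xy
    n = len(support_polygon)
    for i in range(n):
        ax, ay = support_polygon[i]
        bx, by = support_polygon[(i + 1) % n]
        edge_len = ((bx - ax) ** 2 + (by - ay) ** 2) ** 0.5
        # Signed distance from edge a->b; positive means inside for counter-clockwise order.
        signed_dist = ((bx - ax) * (py - ay) - (by - ay) * (px - ax)) / edge_len
        if signed_dist < margin:
            return False
    return True


# Hypothetical double-support footprint, in metres, counter-clockwise.
feet = [(0.0, -0.15), (0.3, -0.15), (0.3, 0.15), (0.0, 0.15)]
print(is_statically_stable((0.15, 0.0), feet))               # True: centered
print(is_statically_stable((0.35, 0.0), feet))               # False: leaning too far forward
print(is_statically_stable((0.28, 0.0), feet, margin=0.05))  # False: inside, but too close to the edge
```

The `margin` parameter reflects a common design choice: keeping the center of mass away from the polygon's edges leaves room to absorb pushes or sensor error.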

Without physical AI, a humanoid remains a mechanical demo.

With it, it becomes an operational actor.

Why Physical AI Is Becoming Strategic

Several factors explain the current acceleration:

- Maturity of AI models
- Lower sensor costs
- Powerful embedded computing
- Industrial pressure on productivity
- Shortage of skilled labor
- Need to automate complex tasks

In 2026, physical AI is no longer a lab topic.

It is becoming a competitive industrial advantage.

Limits and Challenges

Despite its potential, physical AI still faces major challenges:

- Safety and reliability of decisions
- Certification of autonomous systems
- Integration costs
- Dependence on data
- Human acceptance in work environments

AI that acts physically carries new responsibilities.

A software error can now have material consequences.
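One common way to contain that risk is to place a simple, deterministic safety layer between the AI and the actuators, so that a faulty decision cannot exceed predefined limits. The sketch below is loosely inspired by the idea of speed-and-separation monitoring. The function name, the speeds (in m/s), and every distance threshold are hypothetical assumptions and are not taken from any standard.

```python
def supervise_speed(ai_speed: float, human_distance_m: float,
                    stop_distance_m: float = 0.5,
                    slow_distance_m: float = 2.0,
                    max_speed: float = 1.5) -> float:
    """Clamp the speed requested by the AI policy according to human proximity.

    Hypothetical thresholds: full stop inside `stop_distance_m`, linear
    slowdown up to `slow_distance_m`, and the nominal `max_speed` beyond it.
    Speeds are non-negative magnitudes in m/s.
    """
    if human_distance_m <= stop_distance_m:
        limit = 0.0  # person too close: protective stop
    elif human_distance_m < slow_distance_m:
        # Scale the allowed speed linearly between the two distances.
        fraction = (human_distance_m - stop_distance_m) / (slow_distance_m - stop_distance_m)
        limit = fraction * max_speed
    else:
        limit = max_speed
    # The supervisor can only lower the AI's request, never raise it.
    return max(0.0, min(ai_speed, limit))


print(supervise_speed(1.2, human_distance_m=5.0))   # 1.2: no one nearby
print(supervise_speed(1.5, human_distance_m=1.25))  # 0.75: slowed down
print(supervise_speed(1.2, human_distance_m=0.3))   # 0.0: protective stop
```

Keeping this layer simple is the point: a few lines of rule-based logic are far easier to test and certify than the AI model whose output they bound.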

In a warehouse or factory, physical AI becomes the operational conductor.

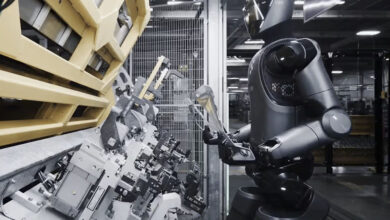

In a European automotive production plant, an autonomous mobile robot equipped with physical AI was deployed to maintain critical assembly lines. Using vibration sensors, high-resolution cameras, and force sensors, the robot continuously patrols the plant, detects anomalies invisible to the human eye (micro-vibrations, abnormal heating, mechanical misalignments), and analyzes them in real time using embedded AI models.

When a risk of failure is identified, the system does not just alert: it adapts its behavior, temporarily slows the affected line, adjusts operational parameters, and can even perform simple corrective actions such as tightening a component or recalibrating a sensor. Result: significantly fewer unplanned stoppages, lower maintenance costs, and better production continuity.
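The detect-then-escalate logic of this example can be sketched in a few lines. The sketch assumes the robot receives periodic vibration readings (RMS velocity in mm/s) for one machine. The rolling z-score rule, the window length, the thresholds, and the action names are all hypothetical; real deployments typically rely on trained models and machine-specific limits. Mild deviations slow the line, and severe ones request a stop and inspection.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationMonitor:
    """Flag vibration readings that drift far from a rolling baseline.

    Hypothetical policy: a z-score above `slow_z` slows the line, and a
    z-score above `stop_z` requests a stop and inspection.
    """

    def __init__(self, window: int = 50, slow_z: float = 3.0, stop_z: float = 6.0):
        self.history = deque(maxlen=window)
        self.slow_z = slow_z
        self.stop_z = stop_z

    def update(self, rms_mm_s: float) -> str:
        action = "normal"
        if len(self.history) >= 10:  # wait until a minimal baseline exists
            baseline = list(self.history)
            mu = mean(baseline)
            sigma = pstdev(baseline) or 1e-9  # avoid division by zero on a flat baseline
            z = (rms_mm_s - mu) / sigma
            if z > self.stop_z:
                action = "stop_and_inspect"
            elif z > self.slow_z:
                action = "slow_line"
        if action == "normal":
            # Only healthy readings feed the baseline, so a fault cannot become "normal".
            self.history.append(rms_mm_s)
        return action


monitor = VibrationMonitor()
for i in range(20):
    monitor.update(2.0 if i % 2 == 0 else 2.2)  # healthy baseline readings
actions = [monitor.update(reading) for reading in (2.15, 2.5, 3.0)]
print(actions)
```

Excluding flagged readings from the baseline is a deliberate choice. Otherwise a slowly worsening fault would gradually shift the reference and stop being detected.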

This perfectly illustrates the difference between analytic AI and physical AI: here, AI perceives, decides, and acts directly on the industrial system without immediate human intervention.

Concrete Example: Physical AI in a Logistics Warehouse

In a high-volume e-commerce logistics center, physical AI orchestrates a fleet of autonomous mobile robots for order preparation. Each robot is equipped with cameras, depth sensors, and load sensors, allowing it to navigate partially cluttered aisles, identify packages of varying sizes, and interact safely with human operators.

The AI does not merely follow a predefined route: it analyzes congestion, order priorities, stock availability, and the state of other robots in real time. Based on these parameters, the system dynamically adjusts trajectories, redistributes tasks, and resequences order picking. When an unexpected event occurs (a misplaced pallet, a damaged package, a sudden spike in orders), the physical AI immediately adapts robot behavior without stopping the flow.
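The task redistribution described above can be sketched as a simple dispatcher. This minimal version assumes a grid-shaped warehouse where distance is counted in aisle cells. The greedy rule (most urgent order to the nearest idle robot), the class and function names, and the example layout are hypothetical; production fleet managers generally use optimization solvers and live congestion data. The sketch shows the key behavior: when a robot is blocked, its order returns to the pool and is re-dispatched while the other robots keep working.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


@dataclass
class Order:
    order_id: str
    location: Cell
    priority: int  # higher = more urgent


@dataclass
class Robot:
    robot_id: str
    position: Cell
    available: bool = True
    task: Optional[str] = None


def manhattan(a: Cell, b: Cell) -> int:
    # Grid distance along aisles (ignores congestion for simplicity).
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def assign(orders: List[Order], robots: List[Robot]) -> Dict[str, str]:
    """Greedy dispatch: most urgent order first, to the nearest available idle robot."""
    assignment: Dict[str, str] = {}
    for order in sorted(orders, key=lambda o: -o.priority):
        idle = [r for r in robots if r.available and r.task is None]
        if not idle:
            break  # remaining orders wait for the next dispatch cycle
        best = min(idle, key=lambda r: manhattan(r.position, order.location))
        best.task = order.order_id
        assignment[order.order_id] = best.robot_id
    return assignment


def handle_blocked(robot: Robot, orders: List[Order], robots: List[Robot]) -> Dict[str, str]:
    """Unexpected event: a robot is blocked, so its order is re-dispatched to another robot."""
    robot.available = False
    pending = [o for o in orders if o.order_id == robot.task]
    robot.task = None
    return assign(pending, robots)


robots = [Robot("R1", (0, 0)), Robot("R2", (10, 0)), Robot("R3", (5, 5)), Robot("R4", (6, 6))]
orders = [Order("A", (9, 1), 1), Order("B", (1, 1), 3), Order("C", (5, 4), 2)]
initial = assign(orders, robots)
print(initial)   # {'B': 'R1', 'C': 'R3', 'A': 'R2'}
rerouted = handle_blocked(robots[2], orders, robots)  # R3 hits a misplaced pallet
print(rerouted)  # {'C': 'R4'}
```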

This deployment increases throughput, reduces human error, and handles peaks in demand without massive hiring. Physical AI becomes the operational conductor of the warehouse, transforming logistics into a self-adaptive system.

Toward a New Era of Robotics

Physical AI marks a turning point. Robotics no longer just executes: it understands, decides, and acts.

We are entering an era where robots become fully intelligent systems, capable of interacting autonomously and contextually with the real world.

This transformation redefines factories, warehouses, logistics, maintenance, and ultimately our very relationship with machines.

Physical AI is not just another trend.

It is the technological foundation of robotics for the next decade.

FAQ – Physical AI and Its Impact on Robotics

1. How does Physical AI work?

Physical AI operates through a continuous loop that includes perception, understanding, decision-making, and action. Data from sensors is analyzed by AI models, decisions are made in milliseconds, actions are executed through mechanical systems, and feedback is continuously integrated to adjust behavior.

2. How is Physical AI different from traditional robotics?

Traditional robots rely on predefined programs and controlled environments, while Physical AI enables machines to adapt dynamically. These systems can handle uncertainty, learn from experience, adjust movements in real time, and collaborate with humans or other robots.

3. Which technologies enable Physical AI?

Physical AI is built on advanced sensors, artificial intelligence models, mechanical actuators, and real-time embedded computing. The convergence of these technologies allows machines to perceive their environment accurately and respond autonomously.

4. What are the main industrial applications of Physical AI?

In industrial environments, Physical AI enables autonomous production systems, adaptive robotics, predictive and corrective maintenance, and smooth human–machine interaction. In humanoid robotics, it is essential for balance, object manipulation, gesture understanding, and navigation in human-designed spaces.

5. What are the key challenges of Physical AI?

The main challenges include safety, decision reliability, system certification, integration costs, data dependency, and human acceptance. Because Physical AI acts in the physical world, errors can have direct material and operational consequences.

6. Why is Physical AI becoming strategic in 2026?

Physical AI is becoming strategic due to the maturity of AI models, lower sensor costs, increased embedded computing power, industrial productivity pressures, and labor shortages. It represents a shift from automation to intelligent autonomy and is shaping the future of robotics and industrial systems.